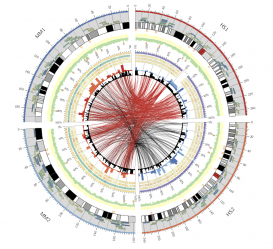

Biology has been dealing with the problems of "big data" for several decades. Even before the Human Genome Project began, biologists struggled to cope with rapidly accumulating protein and DNA sequence data. My project examined the recent history of data in biology, paying particular attention to the "infrastructures" (hardware, software, databases, data structures) that make data-work possible. For instance, the work of assembling a human genome from hundreds of thousands of small sequenced fragments required not only massive computational power but also software capable of dealing with large, messy, and heterogeneous data sets. What sorts of knowledge and practices are required to perform such work, and in what ways do they differ from non-computational work in biology?

In exploring such examples, I aimed to highlight some of the novelties of big data and big data practices. This novelty has less to do with size and more to do with how data are manipulated and used within computer-based infrastructures. I suggested that this novelty demands new methods for studying data, methods that allow us to follow data inside machines, software, and databases; that is, we need to supplement material culture approaches with "data culture" approaches. Ultimately, such analysis will help us to understand the manifold consequences of "big data" as it moves from science into a range of other social, economic, and political domains.